High-level event segmentation: towards a systematic model for mixed-methods research in AI and Visual perception

September 8, 2020

Vipul Nair

Abstract

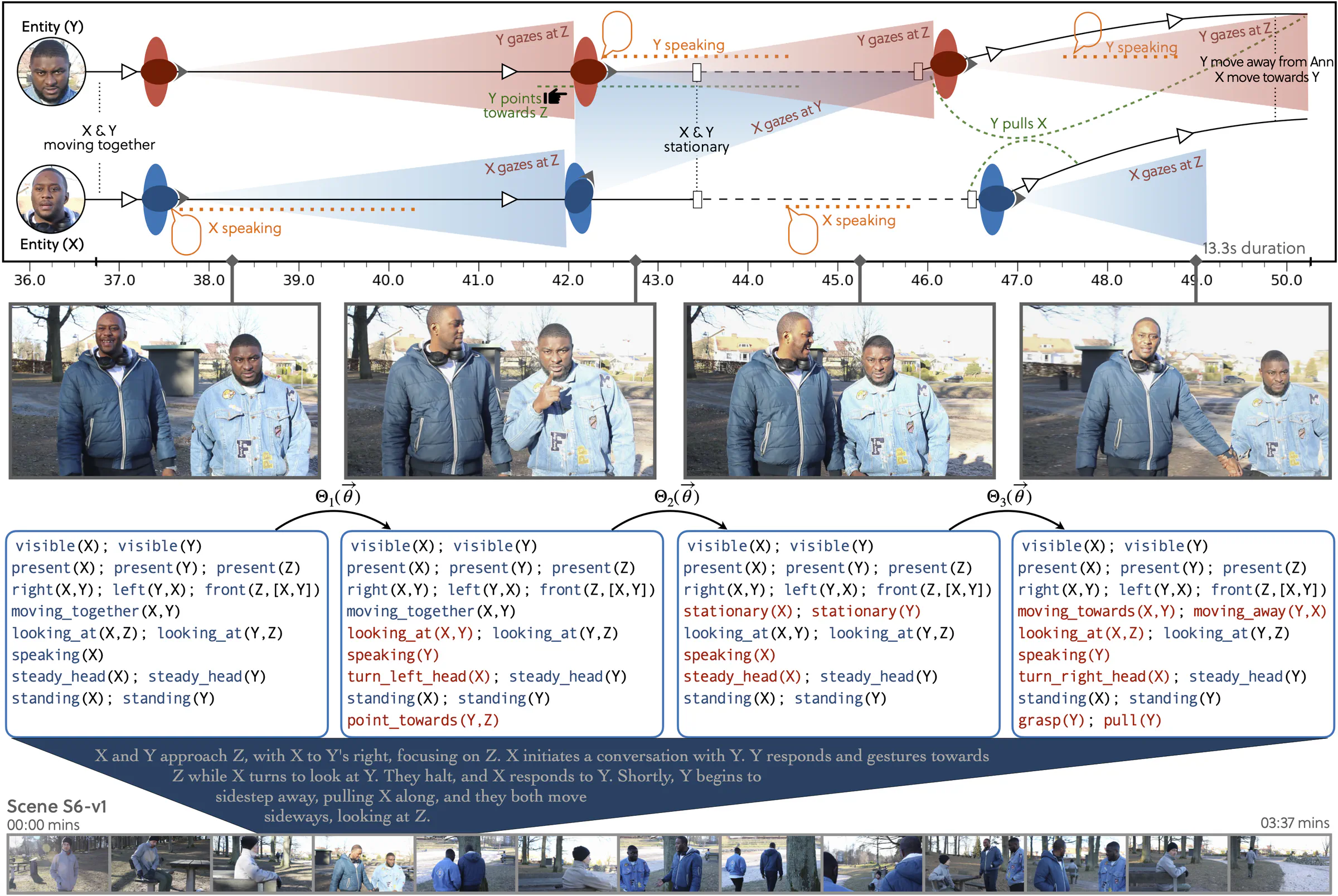

The talk presented a high-level event segmentation framework aimed at developing a systematic model for mixed-methods research in AI and visual perception. It drew on multiple research projects examining how visuoauditory cues (gaze, speech, motion, and hand actions) guide visual attention during passive observation of naturalistic human interactions. The emphasis was on integrating a structured event model with eye-tracking data, both to study attention within ecologically valid interaction scenarios and to characterize how these cues operate independently and in combination. The findings demonstrated robust intra- and cross-modal attentional effects, highlighting how perceptual and interactional signals jointly shape attentional allocation in real-world contexts. The proposed framework illustrates a principled approach for linking event structure, multimodal behavior, and attention, with implications for cognitive modeling, human-centered AI, and the design of interactive systems.

Publication

Artificial and Human Intelligence Workshop at the 24th European Conference on Artificial Intelligence, Santiago de Compostela, Spain, September 2020 (Workshop ECAI 2020)