Similarity judgments of hand-based actions: From human perception to a computational model

August 25, 2019

Paul Hemeren

Vipul Nair

Elena Nicora

Alessia Vignolo

Nicoletta Noceti

Francesca Odone

Francesco Rea

Giulio Sandini

Alessandra Sciutti

Image credit: Authors (this study)

Abstract

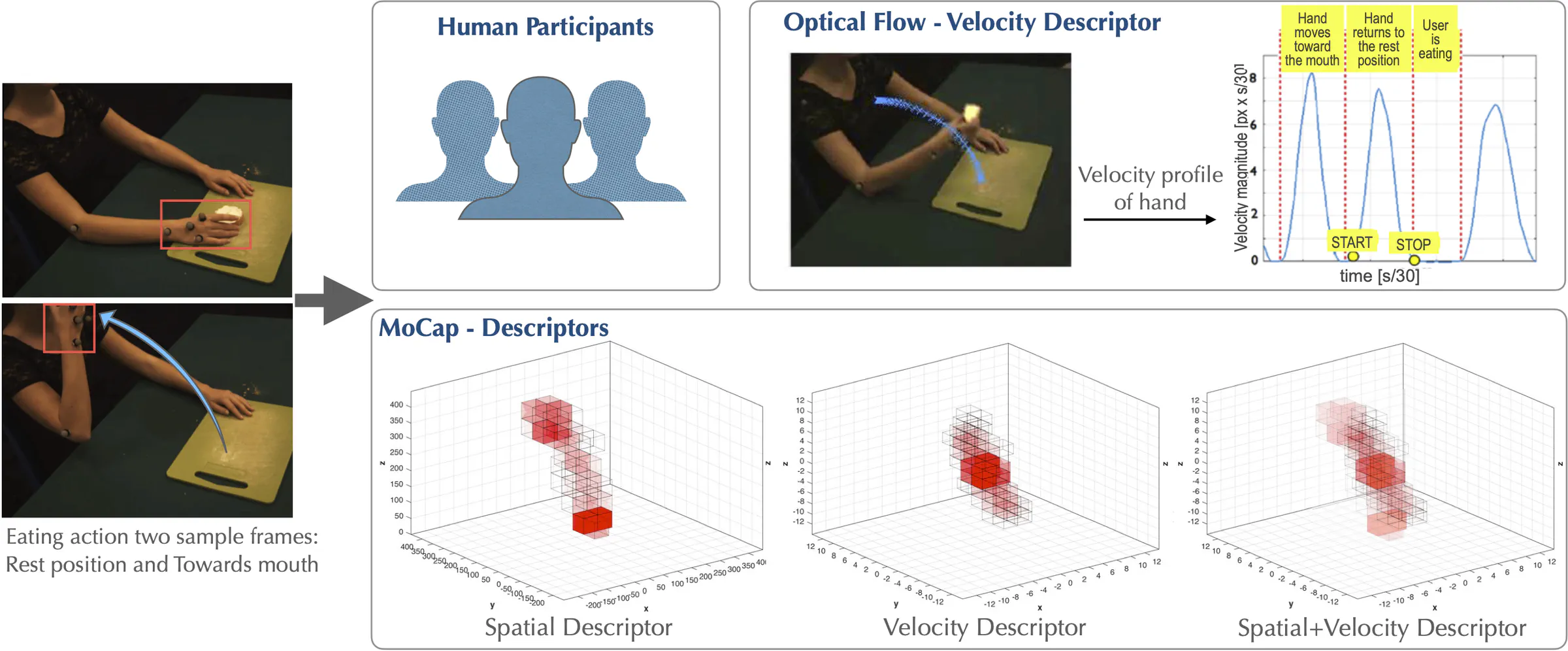

How do humans perceive actions in relation to other similar actions? How can we develop artificial systems that mirror this ability? This research uses human similarity judgments of point-light actions to evaluate the output of different visual computing algorithms for motion understanding, based on movement, spatial features, motion velocity, and curvature. The aim of the research is twofold: (a) to devise algorithms for segmenting motion into action primitives, which can then be used to build hierarchical representations for estimating action similarity, and (b) to develop a better understanding of human action categorization in relation to judging action similarity. The long-term goal of the work is to allow an artificial system to recognize similar classes of actions, including across different viewpoints. To this end, computational methods for visual action classification are applied and then compared with human classification via similarity judgments. Confusion matrices for similarity judgments from these comparisons are assessed for all possible pairs of actions. The preliminary results show some overlap between the outcomes of the two analyses. We discuss the extent to which the different algorithms are consistent with human action categorization as a way to model action perception.
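As a rough illustration of the pairwise comparison described above, the sketch below correlates the off-diagonal entries of a human similarity matrix with those of an algorithm's similarity matrix. The abstract does not state which agreement measure was used, so Pearson correlation here is an assumption, as are the function names and the toy matrix values, which are placeholders and not data from the study.

```python
from itertools import combinations
from math import sqrt


def upper_triangle(matrix):
    """Return the entries above the diagonal, one per unordered action pair."""
    n = len(matrix)
    return [matrix[i][j] for i, j in combinations(range(n), 2)]


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def matrix_agreement(human, model):
    """Correlate human and model similarity over all action pairs.

    Assumption: both matrices are square, symmetric, and index the
    same actions in the same order.
    """
    return pearson(upper_triangle(human), upper_triangle(model))


# Hypothetical placeholder values for three actions (not study data).
human = [
    [1.0, 0.8, 0.2],
    [0.8, 1.0, 0.3],
    [0.2, 0.3, 1.0],
]
model = [
    [1.0, 0.7, 0.1],
    [0.7, 1.0, 0.4],
    [0.1, 0.4, 1.0],
]

agreement = matrix_agreement(human, model)
print(f"human/model agreement: {agreement:.2f}")
```

Using only the upper triangle keeps each action pair from being counted twice and excludes the trivial self-similarity on the diagonal. A rank-based measure could be substituted if the similarity scales of the two sources are not comparable.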

Publication

In 42nd European Conference on Visual Perception (ECVP) Leuven, Belgium, August 25-29, 2019, vol. 48, pp. 79-79. Sage Publications, 2019